superpowers and stupidity - who gets the leverage?

AI is going to give some people superpowers, and it's going to make a lot of people very stupid.

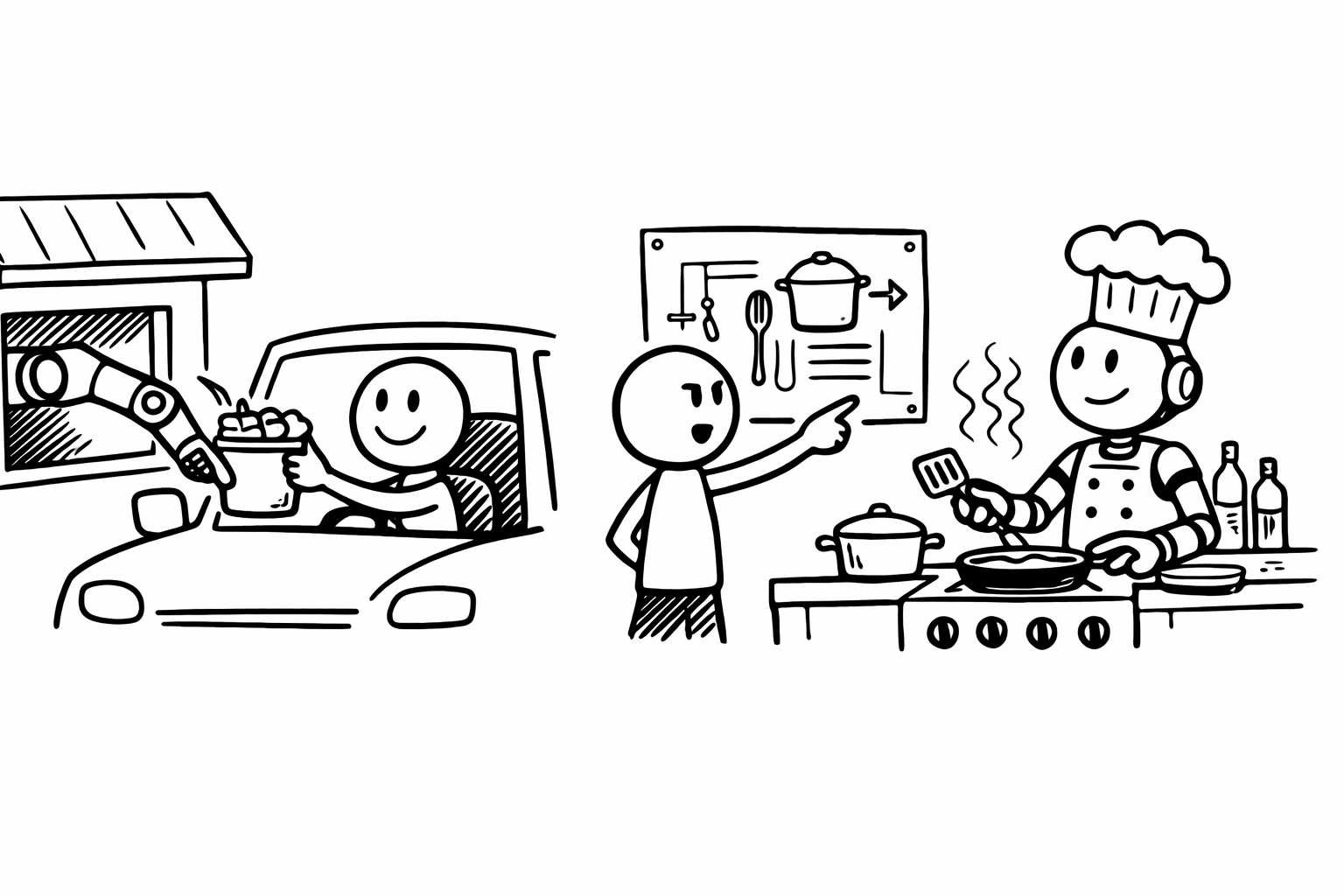

tossing prompts into an LLM is not unlike the KFC drive-through. it feels good in the moment, it saves you time, it's relatively inexpensive and it tastes great. yet, over the long run, we all know how the story ends.

"Code has always been expensive. Producing a few hundred lines of clean, tested code takes most software developers a full day or more. Many of our engineering habits, at both the macro and micro level, are built around this core constraint."

the field of 'software engineering' is currently undergoing a revolution, largely because 'code is no longer expensive to produce'.

"It is hard to communicate how much programming has changed due to AI in the last 2 months: not gradually and over time in the "progress as usual" way, but specifically this last December. There are a number of asterisks but imo coding agents basically didn't work before December and basically work since — the models have significantly higher quality, long-term coherence and tenacity and they can power through large and long tasks, well past enough that it is extremely disruptive to the default programming workflow."

— Andrej Karpathy (see also: wtfhappened2025.com)

code is easy to produce in the same way KFC is easy to eat.

if you care about health and longevity, you will design a solution to your hunger that does not involve KFC.

engineers who care deeply about problem solving, creative solutions, systems thinking - they are going to use these tools to do incredible work.

see this talk here:

i think Simon Willison is one of the most interesting examples of leveraging AI to be more productive and efficient. he is playing the long game: using increasingly capable models to augment and scale one's existing capabilities.

"I think of vibe coding using its original definition of coding where you pay no attention to the code at all, which today is often associated with non-programmers using LLMs to write code. Agentic Engineering represents the other end of the scale: professional software engineers using coding agents to improve and accelerate their work by amplifying their existing expertise."

that expertise means understanding what is and isn't possible, and understanding the problem space: how to connect seemingly different concepts, ideas, and systems to create something new, interesting, and useful.

"Knowing that something is theoretically possible is not the same as having seen it done for yourself."

without taking a position in the AI creativity debate, David Deutsch makes a convincing argument for human creativity.

in this picture, biological evolution explores incrementally: each organism survives and reproduces, moving only to adjacent squares in a maze. human creativity is fundamentally different. we traverse idea-space through conjecture, not gradient descent. the example he uses (that i find most useful) is 'asteroid deflection'. there's no evolutionary pressure that would push humans to develop 'asteroid deflection'. but we humans can think about asteroids, understand physics and gravity, and design technology to prevent one from destroying Earth.

if it's true that humans have this unique characteristic, whether through evolutionary pressure, culture, or something else, then it's likely that the humans with the most leverage will be the ones who can think and understand the space of ideas deeply enough to conjecture, to connect things that don't obviously connect, and to direct these increasingly powerful tools toward problems that matter.

no shade on KFC, but it likely won't help us deflect asteroids.