Bending the Slope of Slop

the proportion of valuable and insightful output needs to grow faster, or at least as fast as, the slop.

successful buzzwords are not invented out of thin air. instead, they identify common experiences we all have and can relate to. giving these experiences a name crystallizes them and makes them communal. that's the power of a buzzword. and when these things snap into focus, they create community, change culture, and influence our work.

'slop' has clearly crossed some threshold of formal recognition - it's now listed in Cambridge and Macquarie, and Merriam-Webster decided it was their 'word of the year' for 2025.

unfortunately, the definition they landed on was

digital content of low quality that is produced usually in quantity by means of artificial intelligence

the problem with this definition is that 'slop' cannot be blamed entirely on AI. do we have any right to pass the buck at all? humans are the initiators; AI just turbo-charges the slop machine.

it doesn't take AI to be low-quality, inauthentic or inaccurate. Any human or AI can be an agent of slop.

— swyx, AIE Talk

and from the article:

plenty of Slop has been made by humans, both well-meaning and cynical, for centuries — AI does not have a monopoly on making Slop. However, AI does make it easy to scale thoughtless output and it is harder to signal intent, effort and quality

'slop' is an existentially important term because it points directly at the crux of a systemic problem - the growth of slop, unchecked and unbalanced, without an equal growth of quality. This is a collective tax with a not-so-nice trajectory.

an important nuance here is that 'slop bad' is lazy framing.

Any experienced creator will tell you that there can be very little relationship between effort and result - the thing you slaved on for a month gets overdone and incoherent, whereas the throwaway tweet of frustration done in 3 seconds gets viral and used as an example for decades to come. Sometimes -you- can't tell what's slop, sometimes one man's slop is another man's kino

so if we accept (a) 'slop' is a perennial challenge, and (b) 'one man's slop could be another man's kino', then the way we confront this challenge is not by attempting to stop the slop train entirely. if your solution to the slop machine is just to produce less, you're not going to help the ratio. the slop will go on.

Humanity slowly starves to death gorging on its own excrement spewed from artificial intestines

rather, we should ensure we shift the balance - the proportion of valuable and insightful output needs to grow faster, or at least as fast as, the slop.

this is why i fucking love the metaphor bending the slope of slop.

bending the slope of slop

it seems simple and painfully obvious in hindsight as i write, but that's the thing about good observations - they're often simple and painfully obvious in hindsight. 'bend the slope of slop' extends and addresses 'slop', and it crystallizes something i'm sure a lot of folks can relate to.

barely a day goes by where i don't grapple with the quality/quantity tension.

any act of creation and exercise of free will is subject to the quality-quantity tradeoff, whether you're building a new product or texting your adult kids.

Twitter can become a great source of anxiety if one is not careful. the pace at which the underlying intelligence is improving, and the pace at which people are leveraging this intelligence and these tools to be more "productive?", are equally anxiety-inducing.

Boris Cherny sharing his workflow of 19 parallel claude codes, wrapped in while loops (video), autonomously scoping, testing and shipping code - this made me feel slightly ill, if i'm being honest. specifically, the part where he referred to his workflow as a "simple setup".

i know the underlying intelligence is increasing, and i (like everyone else) am actively avoiding being bent over by the bitter lesson.

i do hope things trend in this direction, as described by Nato Lambert in his recent "get good at agents" post.

I don't yet know what to prescribe myself, but I know the direction to go, and I know that searching is my job. It seems like the direction will involve working less, spending more time cultivating peace, so the brain can do its best directing — let the agents do most of the hard work.

i'm not convinced 'working less' is coming anytime soon - but I definitely agree it's a wise time to ask harder questions about where we point our limited bandwidth, and how we leverage these tools to build in a more sustainable way.

i feel like deep down we all know there's a middle ground somewhere. some way to leverage this intelligence thoughtfully. a way to carefully curate and compound and scale effort in a way that's - not stopping the slop, not slopping the slop, but harnessing the slop for the greater good.

this is why the 'slope of slop' just slaps so well - it's simple, and it's the perfect mental model for the middle ground.

AIE and Latent Space

i find the surrounding context of this observation and this article particularly interesting because swyx and Latent Space and AIE are actively grappling with the slop machine at scale.

The common thread among AIE and LS is that we have to curate very well, and then scale one person's curation to many, hopefully by crowdsourcing but also taking audience/community feedback very seriously .. We just want to Make Good Shit at Scale.

i attended AIE last year in SF, i've binged the Latent Space pod, and i can safely say it is some of the highest signal, "goodest-shit-at-scale" on the internet.

if you're not familiar with AIE or Latent Space, you should read the original article, and go and look at the curated lists of speakers from AIE and guests on LS.

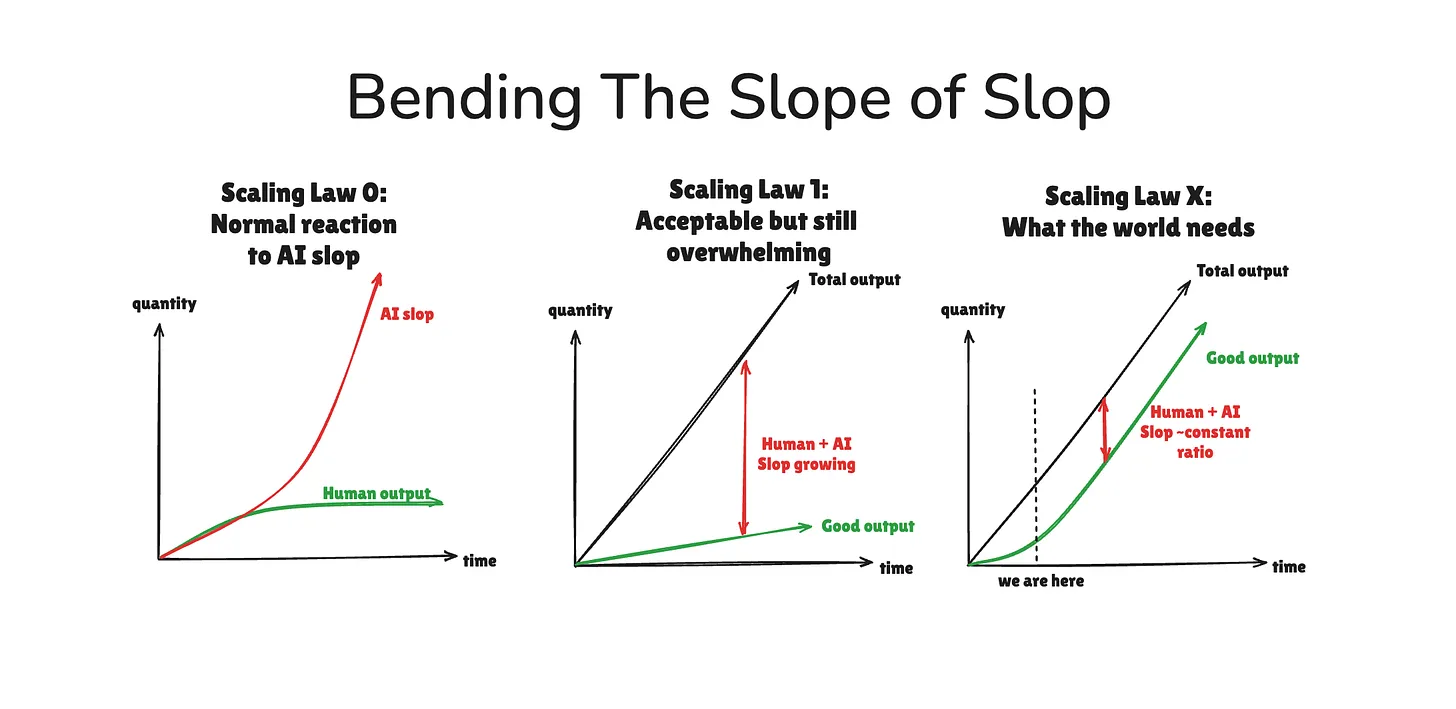

in swyx's article, he diagrams 'bending the slope of slop'

scaling law 0 - you do nothing, slop goes exponential, you lose.

scaling law 1 - you try - total output grows, good output grows, but the gap widens

scaling law 'X' - total output grows, but the good output bends upward.

if i'm understanding correctly - the 'bending' part means shifting the balance: not aiming to eliminate slop entirely, but ensuring the proportion of valuable, insightful, durable output grows at a faster rate.

aka "making good shit at scale".
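to make the three curves concrete for myself, here's a toy sketch. to be clear, this is my own illustration, not swyx's model: the function name `simulate`, the starting values, and the growth rates are all made up, and it assumes both slop and good output simply compound at a fixed rate per period.

```python
def simulate(slop_rate, good_rate, periods=10, slop0=90.0, good0=10.0):
    """Compound slop and good output separately; return one row per period.

    Each row is (period, slop, good, good_share). All numbers are
    hypothetical and only illustrate the shape of the curves.
    """
    slop, good = slop0, good0
    rows = []
    for period in range(periods + 1):
        rows.append((period, slop, good, good / (slop + good)))
        slop *= 1 + slop_rate
        good *= 1 + good_rate
    return rows


# made-up growth rates per period for the three regimes described above
SCENARIOS = {
    "law 0 (do nothing)": {"slop_rate": 0.5, "good_rate": 0.0},
    "law 1 (try, gap widens)": {"slop_rate": 0.5, "good_rate": 0.2},
    "law X (bend the slope)": {"slop_rate": 0.5, "good_rate": 0.7},
}

if __name__ == "__main__":
    for name, rates in SCENARIOS.items():
        rows = simulate(**rates)
        start_share, end_share = rows[0][3], rows[-1][3]
        print(f"{name}: good share {start_share:.1%} -> {end_share:.1%}")
```

in this toy version, slop grows at the same rate in all three scenarios. the only thing that separates law 1 from law X is whether `good_rate` sits below or above `slop_rate`: below, and the good share shrinks even though good output is growing; above, and the share starts to climb.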

bending the individual slop-slope

i'm aware that swyx's slop-slope metaphor is being discussed in terms of curation quality at scale for communities and media empires - like AIE and Latent Space.

but i'm certain this metaphor can (and should) be transferred to the individual. any individual who wishes to scale their work in a more durable and thoughtful direction. not stopping the slop, not slopping the slop, but harnessing the slop.

if you're an engineer, it's obvious that the zero-to-one, syntax-level work of writing code is gone. anyone can slop-up a working application or feature with little more than a $20 subscription.

if you're a writer/researcher, you can now 'generate' 100 articles in as many minutes. if you are at the savvy end of the AI-literate spectrum, you can do this quite efficiently and effectively. maybe you feed in many of your writing examples, and spin off many sub-agents to do thorough research on 'x' topic.

in the short-term, your slope looks good. you're leveraging the slop machine.

but regardless of how good the underlying intelligence becomes, there will come an inflection point where allocating intelligence no longer yields the same results.

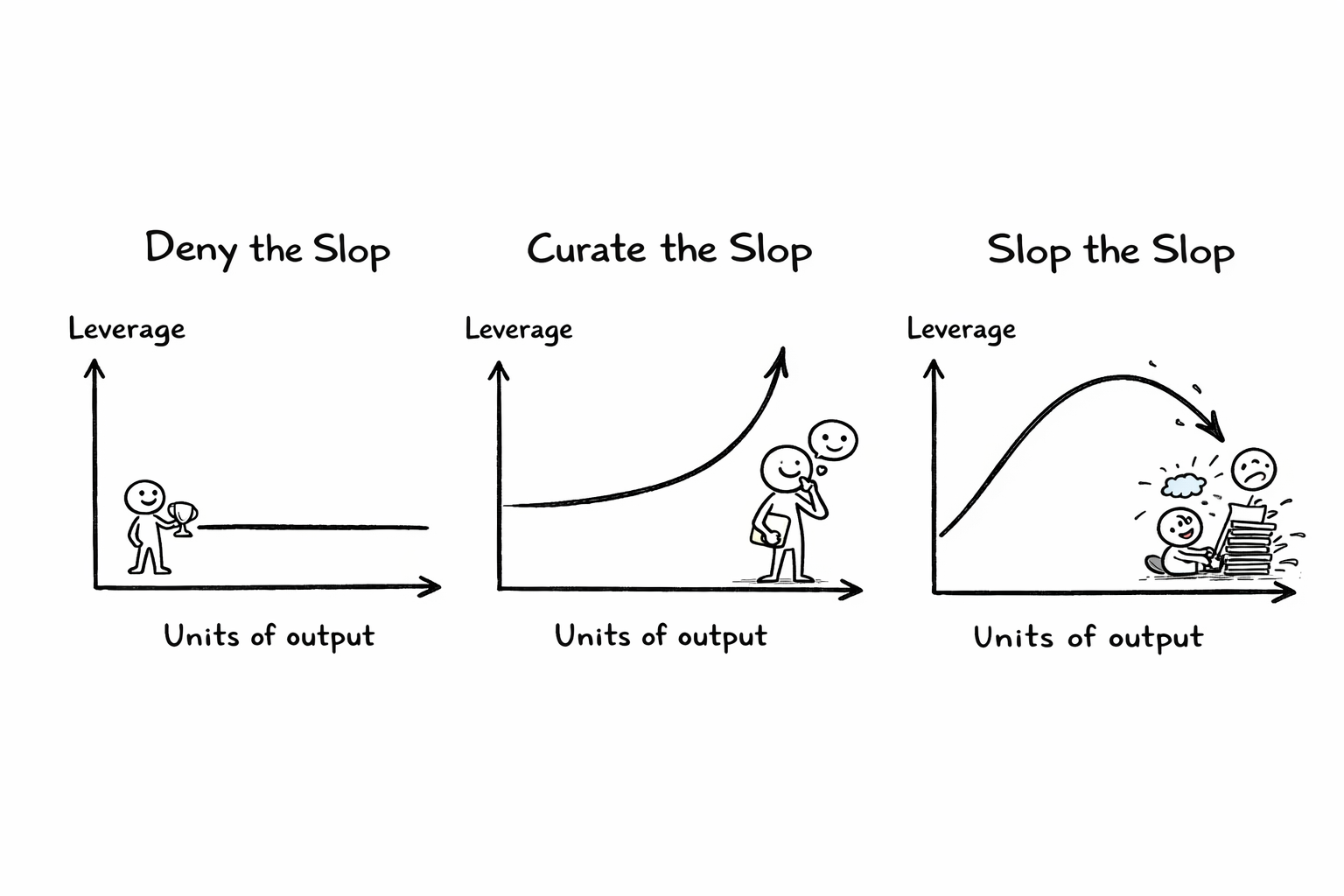

let me explain the diagram with two scenarios.

scenario 1: researchers

Researcher A slops the slop, researcher B harnesses the slop

two people with the same baseline ability, same access to AI tools, and the same goals. both of them want to do insight-dense research and writing. both of them are reading papers and using AI for summaries and drafts.

the difference is purely in how they attempt to bend the slope.

Researcher A slops the slop - reads everything, skims aggressively, uses AI to summarize heaps of papers and publishes quickly. the output count is high, it feels productive, and early on it works: moving fast pays off, and the AI is making them faster.

under the surface, however, they're not building durable mental models. the synthesis is shallow, and so is the writing. the writing isn't thinking. they need more and more input to stay at the same pace, and eventually the writing plateaus.

Researcher B takes the middle path - harnessing the slop monster to make more good shit at scale.

they use their tools selectively as 'tools of thought' to reduce friction while they continue to externalize ideas, build their own mental models, and form their own opinions. they force synthesis, look for interesting connections, and grapple even when it feels a bit slower and a bit gnarlier. they publish less, and maybe they even feel like they're falling behind.

but the thing they're building underneath is more robust and durable. conceptual scaffolding, reusable frameworks, taste. each new piece of research is curated and organised carefully into their own web and ontology. AI becomes a multiplier, not a crutch. over time their writing gets sharper, they can say new things with fewer inputs. this is the compounding effect.

scenario 2: engineers

apply the same thing to two SWEs.

Engineer A is absolutely token-maxing. minimum of 13 parallel claudes through the day - if they haven't woken up to 47 commits and a triple-figure token bill in the morning, something has gone wrong. they prompt aggressively, ship fast, and no longer even take time to understand the codebase. the velocity and the bend look great initially.

but they're not building any real understanding of the architecture. the abstractions are fragile and there's a disconnect between understanding what the user wants and how this might translate into the structure and scaffolding of the app.

Engineer B uses AI more as a thought partner. they still use the tools to generate the code, but they produce less code initially, because they're spending more time thinking in systems and understanding the code. slower, less visible output early on.

over time, they build and compound better mental models of the system, cleaner abstractions, and intuitions about failure modes. their prompts get better, so the AI outputs get better, and they can predict bugs before they exist.

high-taste, critical thinking and curation

at the end of the day, whether you're talking about scaling a media empire, or individually scaling your work - it's the same thing.

high-taste, critical thinking and curation.

in a world of increasing underlying intelligence, how do you ensure you're harnessing the machine to focus on and scale the most important stuff?

i even think about this in terms of how we consume content. there's a sloppy approach to consuming content, and there's a high-taste, high-leverage way to consume content.

you can mindlessly and passively absorb content/slop, and feel like you're learning (the passive consumption trap). or you can use the available tools and intelligence to really understand and engage with the material, externalise your thinking, grapple with the ideas, steelman, strawman, etc.

bending the slope of slop won't be easy, and it will require a collective effort.